Towards Moore's Law Software: Part 1 of 3

(Disclaimer: I’ve been on a mental journey these past four weeks thinking about how mainstream programming practices are not very expressive. This problem leads to a lot of bloated code that buries its core ideas. In this three part series, I try to highlight a few lesser-known ideas that some smart guys are working on and show how we might realistically get to a much better place. I give the background in parts 1 and 2 and then make some conclusions in part 3. If you’re pressed for time or don’t want to read much, stop here and go to the third part. However, if you have the time, the third part will make more sense if you read these first two parts.)

Six times more code. I turned in my exam and walked out of the room in shock.

The last question on my programming languages class midterm in the spring of 2004 blew me away. Part one asked us to write a function to swap items in a list based off a function using Scheme and part two had us write the equivalent code in Java. My Scheme version took up about a fourth of the page where the Java version took a page and a half. Even today, with the latest version of C# that has many new toys that were missing from Java at the time, such as lambda functions, I probably couldn’t cut the ratio below three to one.

I’ve often reflected back on the midterm and that class. It was the first real time I was exposed to programming languages that weren’t more or less the same style that I had been used to. I didn’t really understand how different the other languages were. My ego also took a hit when I found myself struggling for a few weeks to get up to speed on functional languages like Scheme and ML. It was like being placed in a foreign land without knowing the local language. As I started to adjust, something interesting happened. I began to see honest differences in how I thought about programming problems.

The whole experience led me to finally to conclude that there is no single perfect universal programming language. Until I came to that point, I wasted a lot of time thinking that GW-BASIC QBASIC QB 4.5 VB4 Delphi Java C++ C# 1.0 was the only language I would ever need. If I were smart, I’d realize that 10 years from now, there’s a good chance that my day to day language won’t be C# since 10 years ago I was happily writing Delphi 2.0 code.

I think the most important lesson that midterm question taught me is that the language you pick for a specific problem makes a notable difference. Plato expands on this when referring to good design:

“First, the taking in of scattered particulars under one Idea, so that everyone understands what is being talked about… Second, the separation of the Idea into parts, by dividing it at the joints, as nature directs, not breaking any limb in half as a bad carver might.” Plato, Phaedrus, 265D.

Indeed, it’s important to pick a language that divides the problem “at the joints, as nature directs” instead of forcing you to be “a bad carver.”

Admitting There is a Problem

More recently, I had a discussion with Alan Kay, one of the founders of object oriented thinking. Like a teenage fanboy backstage at a popular rock band wanting an autograph, was I wanting object oriented design help from him. I told him that I was having problems making a piece of code I was working on elegant because the language and other design patterns being used were adding too much “goo.” I was thinking he’d have some witty pattern advice or something else.

His response was classic: “You ‘avoid the goo’ by avoiding the goo.”

This was such a profound statement to me that it’s taken me four weeks to begin to understand what he meant. There are times when I get so entrenched in “coping” with the language and its shortcomings that I fail to see that there is a much better attack vector that finds the problem’s natural joints. I often think I am far too pleased with my current tools. It doesn’t help that many of the blogs that I read have similar feelings. The problem is that they don’t force me out of my comfort zone to dare to think different.

A discomforting side effect is that it I might not even realize that I’m not dreaming big enough. J.C.R. Licklider, a key person that led to the creation of the Internet, specifically referred to his network idea as the “Intergalactic Network” because he wanted to get people to think really big thoughts instead of cornering themselves into “safe” solutions. This is the kind of kick in the pants that I need from the founders of modern computer science.

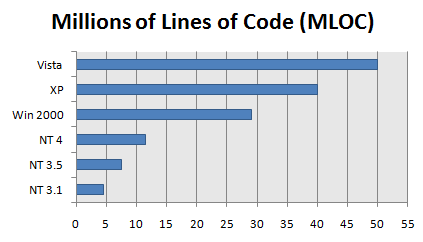

We’ve done some good software engineering over the past few decades, but the result is usually a system with lots of code. For example, take Windows:

As you can see, Vista is huge at over 50 million lines of code. This is just the operating system, and doesn’t account for many other applications we use on a daily basis. I’m really not picking on Microsoft; I happily use Vista on my home and work machine. Linux, like many other open source projects, has a hefty amount of code as well.

Is software really that complicated?

Does it really have that many interesting ideas that are inherently complicated to warrant millions of lines of code? Even if “lines of code” is a poor way of measuring code complexity, a single person can’t even hope to read that many lines, let alone understand them.

Is it big because it has to be big or is it big because we, as an industry, have been bad “carvers?”

Back to Simplicity

Brevity is hard to achieve, but it often pays off. The less code I write means there is less room for bugs to get in. Thus, it seems that in programming, as in mathematics, beauty is measured per word.

Brevity is hard to achieve, but it often pays off. The less code I write means there is less room for bugs to get in. Thus, it seems that in programming, as in mathematics, beauty is measured per word.

Most programs are just one or two core, sometimes beautiful, ideas. The problem is that there is so much scaffolding “goo” around them that they get obscured.

I remember when I learned of how Ethernet allows multiple computers to share a single wire. When two or more computers try to “talk” on the wire, they each notice this “collision” and then they back off from transmitting and then… they wait a random amount of time before they transmit again. When I was in school and first understood this core idea, I was impressed with how elegant it was. It sort of works in the same way that people find their turn to talk at a dinner table.

Another program that has a beautifully simple core idea is the “traceroute” program. It tells you what path your data travels along in order to get from your computer to a destination computer. Each packet that your computer sends has a time to live (TTL) number on it. As the intermediary routers pass that packet to another router that’s (hopefully) closer to the destination, that number gets decremented by 1. If that number ever hits zero, the packet gets dropped and note is sent back to its sender with a message saying something like “Hi, this is router XYZ, and I’m sorry to report that your packet died in transit because there were too many hops between you and where it wants to go.” The beautiful idea is that traceroute takes advantage of this “error” message. It puts a TTL of 1 on the first packet it sends out and records the router that gives its condolences. Then a TTL of 2, then 3, and so forth until it finally gets a confirmation that the packet arrived at its destination. It’s beautifully simple, but this idea is obscured by the hundreds of lines needed in a traditional implementation of it.

Maybe we write so much code to make others think the ideas are really hard or something. It’s unfortunate that we do this because it scares off some really bright kids away from our profession. Many of the algorithms that we use on a daily basis can easily be performed by young kids.

Take sorting.

Sorting algorithms as we typically learned them in school usually take awhile to understand. However, there is a much better way to teach them. I had the privilege to meet Tim Bell, a CS professor at the University of Canterbury, who has helped develop a set of activities that teach children how to learn many core ideas of computer science without even needing a computer.

Sorting algorithms as we typically learned them in school usually take awhile to understand. However, there is a much better way to teach them. I had the privilege to meet Tim Bell, a CS professor at the University of Canterbury, who has helped develop a set of activities that teach children how to learn many core ideas of computer science without even needing a computer.

Since kids can often understand the ideas behind our code, maybe we write so much code because it seems to offer job security. I don’t really care to make things more complicated than they absolutely have to be. If an average 8 year old can understand my code, I’ll consider that a high compliment. Really!

I’d like to express a pure thought without needing so much syntax scaffolding goo. I want to write code that is so simple that just looking at it makes it very obvious what it does and leaves so little room for error that it’s practically bug free just as a result of being so.. simple.

And by simple, I don’t mean using tricks. I’m tired of seeing people in demos click “next, next, next, finish” and having a wizard generate hundreds of lines of code that you might need to tweak. And by short, I don’t mean doing bad things like removing the white space and putting everything on one line or any using other types of tricks. When I say simple, I mean it’s a simple thought.

It’s imperative to keep things simple when you write them because the code is often read many times. Ruby’s creator, Matz, writes:

“Most programs are not write-once. They are reworked and rewritten again and again in their lives. Bugs must be debugged… During this process, human beings must be able to read and understand the original code; it is therefore more important by far for humans to be able to understand the program than it is for the computer.”

Humans are the audience. Stop trying to make things nice for the compiler. It’ll understand regardless. Write for the human. It’s usually only the ideas that the code represents that are beautiful, so the language shouldn’t obscure them too much. At the very least, make your code habitable.

Going Meta

Ok, ok; enough ranting that code can be ugly. How do we get better?

Unfortunately, this is difficult to answer for a guy like me to answer because I’ve been so entrenched in the typical way. It’s sort of like Henry Ford coming to me before he invented the Model T and asking what America needs for transportation. I would have probably replied: “a faster horse is all we need!” So having acknowledged my weaknesses, I’ll look to others that are far smarter than me who are looking into concepts like metaprogramming.

One such guy is Charles Simonyi, who was lead architect on what became Word and Excel. Before immersing himself in the land of office documents and spreadsheets, he was a PhD student at Stanford. He wrote his PhD thesis on “Meta-Programming: A Software Production Method” and the idea has been in his mind ever since. In the 90’s, he moved on to Microsoft Research and while there wrote “The Death of Computer Languages: The Birth of Intentional Programming.” The idea behind Intentional Programming (IP) is that your source code, be it graphical or text, “talk[s] to machines as little as possible. Instead, [you] would concentrate on capturing the intentions of computer users.”

Charles really was enamored by the idea of IP and desperately tried to convince Microsoft that it was the direction the company should take for future programming language development. Think about what must have gone through Microsoft’s top level management’s mind. It was probably something like “that’s really cool Charles, but we can’t really make such a disruptive change right now, you see, we’ve been funding this ‘.net’ project for awhile that’s a bit more conservative than what you’re suggesting, and it’s much closer to being shipped… how about you work on making that nice instead?”

He quit.

UPDATE: Part two is now available.